Using the action

This action is available from the Tools menu, as shown in the screenshot below.

Let's go into detail about the fields on Run Script.

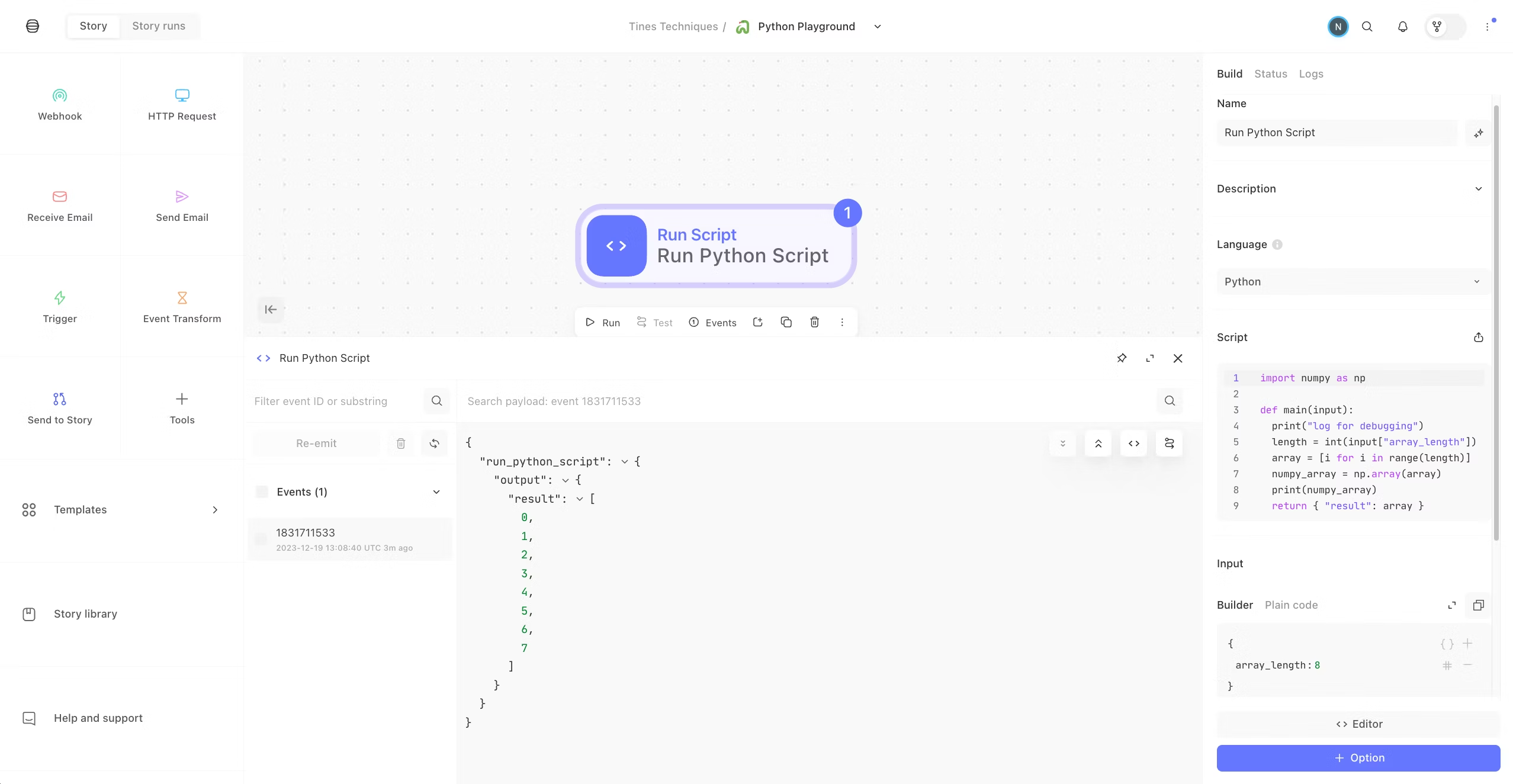

Script

This is where to place the Python code you would like to execute.

If there are any dependencies to import for your script, declare them first as you normally would.

Define your main function with def main(input): so that any inputs are passed to it, and then write your script inside it.

Important: you must define a main function in your script.

Define your return in the format of a JSON object. This will be the content of the event that gets emitted from the action. For example, this is the output of the sample script in the template.

Output of script

Inputs

These are the values that will be passed to your script at runtime. You can use pills in the builder to refer to any data from other actions upstream in your story.

To refer to these values in your script, use input["<<object_name>>"].

In the template action, note that Input has a key named array_length. So, in the Script section, this is called with input["array_length"].
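As a minimal sketch of how the Script and Inputs fields fit together, the script below reads array_length from its inputs and returns a JSON-serializable object. This is not the template's actual script: the work it does and the output keys (length, values) are illustrative assumptions, as is the idea that the value may arrive as a string.

```python
import random


def main(input):
    # "array_length" is the key configured under Inputs in the template action.
    # Coerce to int in case the value arrives as a string (an assumption).
    length = int(input["array_length"])

    # Illustrative work only: build a list of random integers of that length.
    values = [random.randint(0, 100) for _ in range(length)]

    # The returned dict becomes the content of the emitted event.
    # The key names here are hypothetical.
    return {"length": length, "values": values}
```

Returning a plain dict keeps the output easy to reference from downstream actions.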

Requirements

List the dependencies required to run your script in the format of a requirements.txt file with each requirement separated by a new line. Example:

numpy==1.25

http==0.02

Timeout

Time in seconds to wait before terminating the script. The default is 10 and the maximum is 110.
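One way to avoid being terminated mid-run is to have the script track its own time budget and return partial results before the limit. The sketch below assumes the default timeout of 10 seconds; the items input key, the safety margin, and the doubling "work" are hypothetical placeholders. It also assumes the budget starts when the script starts, which may not match exactly how the action measures its timeout.

```python
import time

# Assumed to match the Timeout field configured on the action (default 10).
TIMEOUT_SECONDS = 10
# Hypothetical margin reserved for building and returning the result.
SAFETY_MARGIN_SECONDS = 1.0


def process_items(items, budget_seconds, clock=time.monotonic):
    """Process items in order until done or the time budget runs out."""
    deadline = clock() + budget_seconds
    processed = []
    for item in items:
        if clock() >= deadline:
            break  # stop early instead of being terminated
        processed.append(item * 2)  # placeholder for real work
    return processed


def main(input):
    items = input.get("items", [])  # "items" is a hypothetical input key
    processed = process_items(items, TIMEOUT_SECONDS - SAFETY_MARGIN_SECONDS)
    # Report how much work is left so a later run can pick it up.
    return {"processed": processed, "remaining": len(items) - len(processed)}
```

Reporting the remaining count makes it possible for the story to decide whether to run the action again.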

Networking mode

Since we are making requests from AWS Lambda, egress IPs are different than the ones the tenant uses (e.g. to make HTTP requests from the HTTP Request action). We support three networking modes:

Standard: the egress IP address is subject to change and shared with other customers.

Dedicated: the IP addresses are static and can be found for your tenant by visiting <<tenant-domain>>/info, under lambda_egress_ips. These IP addresses are shared with other customers unless you are using a dedicated tenant.

No networking: the script will not have internet connectivity.

AWS Support

With Run Script you can utilize Python scripts with the Python AWS SDK (boto3) or the AWS CLI to interact with AWS services. Before you begin, you will need to attach a Tines AWS Credential to your Run Script with the required permissions.

AWS IAM Role

You can start by creating an AWS IAM role that has permissions to execute AWS API calls on your behalf and that can be assumed by an AWS Lambda function from the Tines AWS account.

To get started, please follow this doc to set up a Tines AWS Credential. Once you have set it up, make sure that the Trust Relationship for the IAM Role in your AWS account matches the following, where TinesAwsCredentialGeneratedID is the External ID from the Tines AWS Credential you created. We recommend allowing assume role requests from both our AWS accounts.

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "AWS": "857223745291"
      },
      "Action": "sts:AssumeRole",
      "Condition": {
        "StringEquals": {
          "sts:ExternalId": "TinesAwsCredentialGeneratedID"
        }
      }
    },
    {
      "Effect": "Allow",
      "Principal": {
        "AWS": "825838939522"
      },
      "Action": "sts:AssumeRole",
      "Condition": {
        "StringEquals": {
          "sts:ExternalId": "TinesAwsCredentialGeneratedID"
        }
      }
    }
  ]
}
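If you prefer to generate the trust policy rather than edit it by hand, a small helper along these lines could produce the same document for your own External ID. This is only a sketch: the function name build_trust_policy is hypothetical, and the account IDs are copied from the policy above.

```python
import json

# The two Tines AWS account IDs that appear in the trust policy above.
TINES_ACCOUNT_IDS = ("857223745291", "825838939522")


def build_trust_policy(external_id, account_ids=TINES_ACCOUNT_IDS):
    """Return the trust policy as a dict, with one statement per account."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": account_id},
                "Action": "sts:AssumeRole",
                "Condition": {
                    "StringEquals": {"sts:ExternalId": external_id}
                },
            }
            for account_id in account_ids
        ],
    }


if __name__ == "__main__":
    # Replace the placeholder with the External ID from your Tines AWS Credential.
    policy = build_trust_policy("TinesAwsCredentialGeneratedID")
    print(json.dumps(policy, indent=2))
```

The printed JSON can then be pasted into the Trust Relationship editor for the IAM role.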

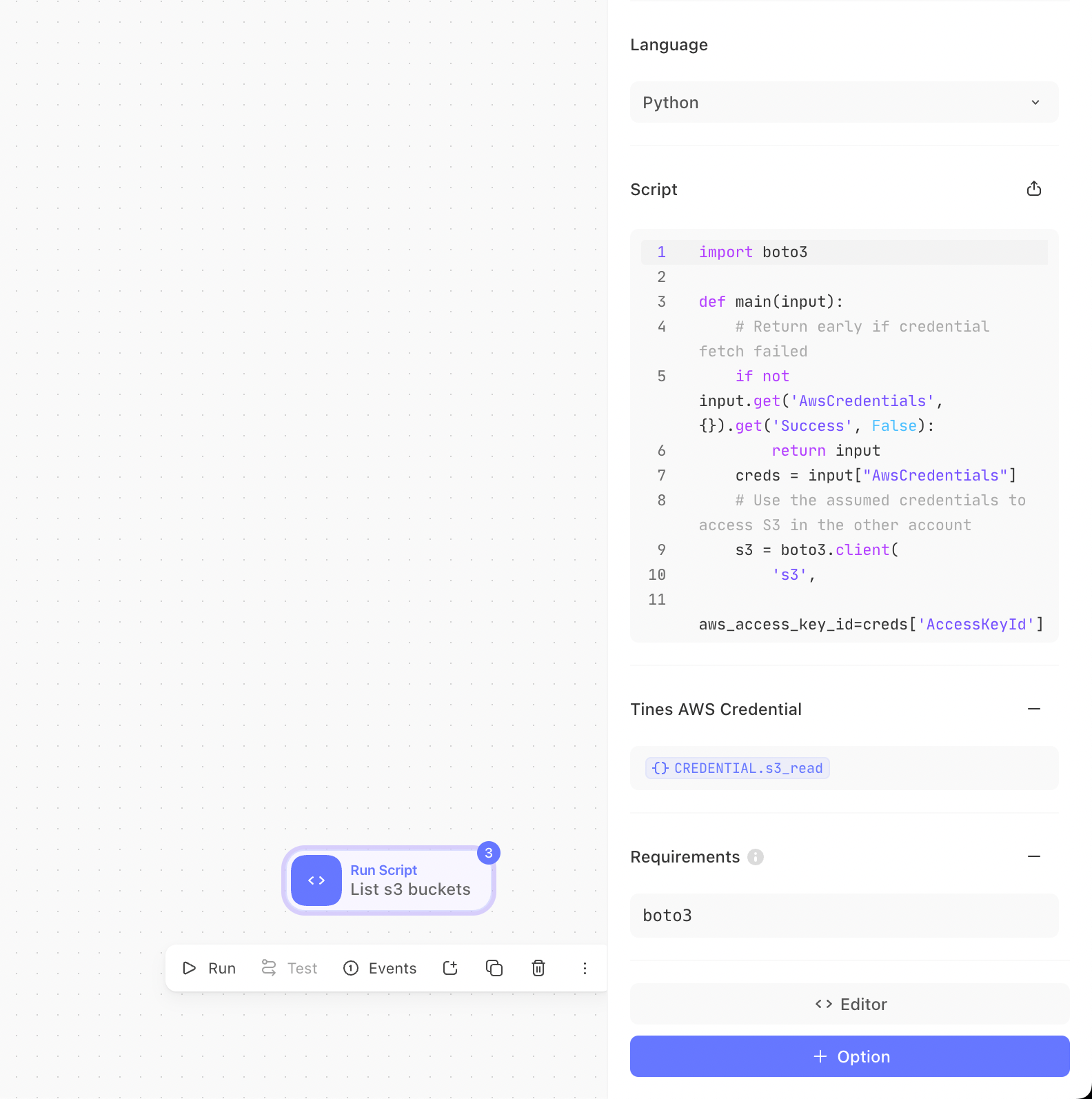

After this, you can attach any permission policy you need for the AWS API calls you will be making from the Run Script action. Once you have added the ARN of the newly created IAM role to the Tines AWS Credential, you can reference that Credential in the Run Script action, as in the following image.

AWS Python SDK Support (boto3)

You can now start using Boto3 to interact with AWS APIs securely. The necessary credentials, retrieved from the IAM role you specified, will be included in the input event of your function under input['AwsCredentials']. Below is an example Python function that lists S3 buckets.

import boto3

def main(input):
    # Return early if credential fetch failed
    if not input.get('AwsCredentials', {}).get('Success', False):
        return input

    creds = input["AwsCredentials"]

    # Use the assumed credentials to access S3 in the other account
    s3 = boto3.client(
        's3',
        aws_access_key_id=creds['AccessKeyId'],
        aws_secret_access_key=creds['SecretAccessKey'],
        aws_session_token=creds['SessionToken'],
    )

    # List buckets
    buckets = s3.list_buckets()

    return {'buckets': [bucket['Name'] for bucket in buckets['Buckets']]}

AWS CLI Support

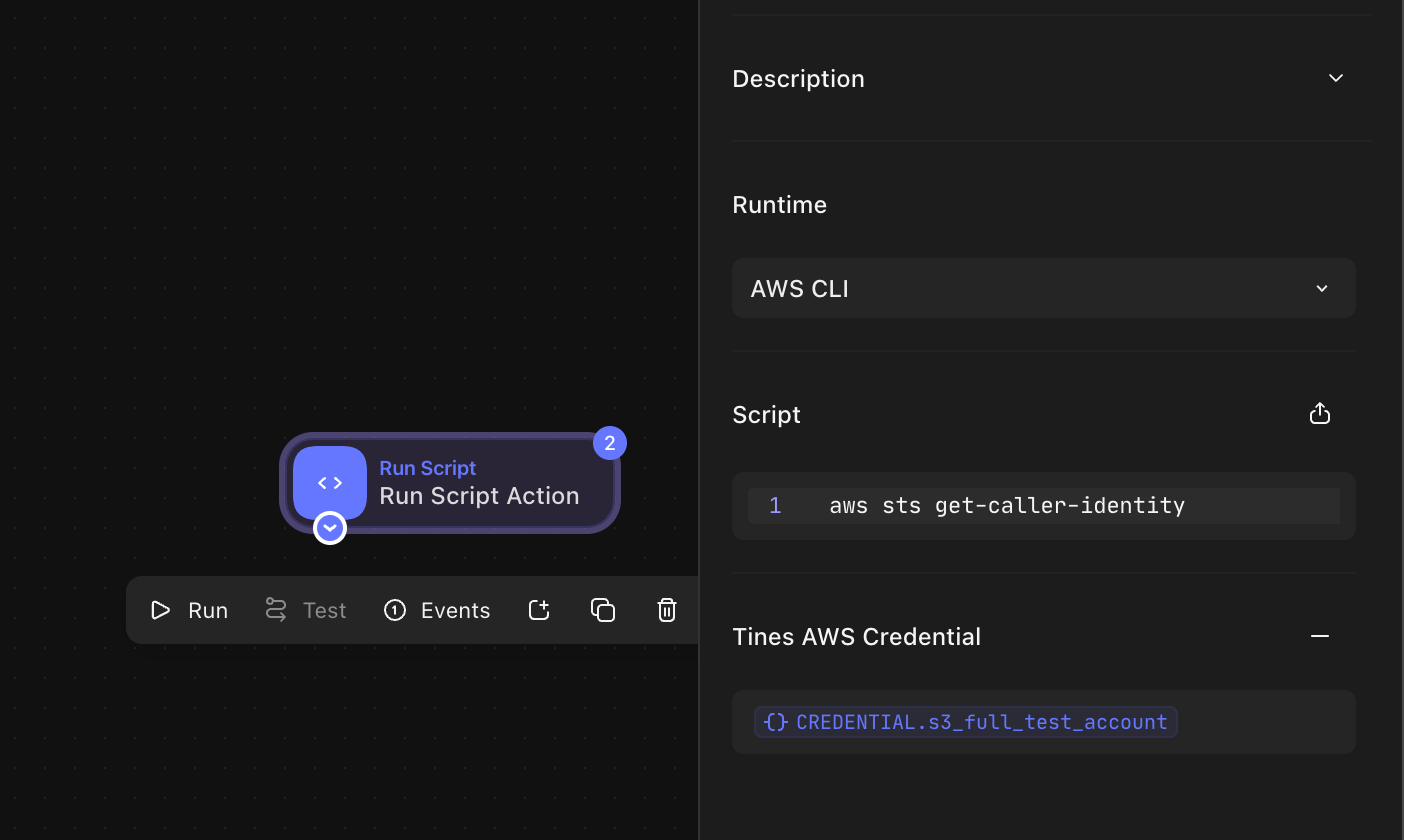

Alternatively, you can use the AWS CLI runtime directly in your Run Script action and use the AWS CLI to interact with AWS services. Example:

aws sts get-caller-identity

Python in Tines: How it works

Tines runs in AWS, and we make creative use of their underlying services so that we do not actually process any Python in the application. This is great for multiple reasons, but here are the top 2:

Your code is executed in a secure, regulated environment, running in the same region as your tenant.

We did not have to update (and will not have to maintain) any of our code to support running Python in our Ruby-based platform. Granted, that is a selfish reason, but it means we get to spend more cycles building out more amazing features.

Behind the scenes

Your cloud tenant has a role that allows it to create or invoke Lambdas in our AWS environment, adjacent to your tenant. Each Lambda is only accessible by the tenant that created it.

The Lambda itself has a limited role assigned, so your Lambda has zero access to any other AWS resources.

Any packages in the Requirements will be built at runtime as Lambda Layers.

On the first run of your Python script action, you may notice a short delay while your Tines tenant dynamically builds the Lambda function required. Any subsequent requests should respond promptly.

Limitations

Run Script Action is currently limited to the python3.9 runtime.

Run Script Action is available for self-hosted, but there may be some configuration needed. For more details, see Run script for self-hosted.

As we are using AWS Lambda under the hood for mode: "cloud", we are subject to their quotas on script execution. Specifically:

Requirements have a package limit of 250 MB in unzipped size.

The maximum payload you can pass to the function is 6 MB (including the size of the code).

The maximum output of the function is 6 MB.

The disk space available for ephemeral storage is limited to 756 MB.

Please refer to the AWS docs for more details.
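To stay within the output limit listed above, a script can measure its serialized result before returning it. The sketch below is a rough guard, not an exact accounting: it assumes the 6 MB limit means 6 * 1024 * 1024 bytes of UTF-8 encoded JSON, and the rows input key and the error shape are hypothetical.

```python
import json

# Assumption: 6 MB is interpreted as 6 * 1024 * 1024 bytes of UTF-8 JSON.
MAX_OUTPUT_BYTES = 6 * 1024 * 1024


def output_size_bytes(result):
    """Approximate the size of the result once serialized as JSON."""
    return len(json.dumps(result).encode("utf-8"))


def main(input):
    result = {"rows": input.get("rows", [])}  # "rows" is a hypothetical key
    size = output_size_bytes(result)
    if size > MAX_OUTPUT_BYTES:
        # Return a small summary instead of an oversized payload.
        return {
            "error": "output_too_large",
            "size_bytes": size,
            "row_count": len(result["rows"]),
        }
    return result
```

When the result is too large, a common alternative is to write it to external storage and return only a reference to it.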

Best practices

Pull the script from your code repository. While Tines has version control and change management for your stories, we are an automation platform, not a code repository. When dealing with scripts, it is best practice to keep your code in a managed repository. Luckily, Tines makes it easy to call out to your repository of choice so you can maintain proper code hygiene and integrate your script with the rest of your Tines story.

Make your scripts idempotent if possible. While we attempt to run script actions exactly once, they may need to be retried. This means that if the function has side-effects (e.g. making a call to an external service), those could occur more than once.

Depending on the nature of your scripts, you may not want all members of your Tines tenant to have direct access to them. In that case, we recommend using a separate Tines team to create a more isolated environment where only certain users have access. You can then configure any of the script workflows as send to stories. This would allow other teams in your tenant to call the send to story to execute a script and receive the output, but not permit access to the actual script itself.
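To illustrate the idempotency recommendation above, one common approach is to derive a deterministic key from the script's inputs and send it with any side-effecting request, so that a retried run can be recognized as a duplicate. The sketch below only computes the key; the ticket_id and comment inputs are hypothetical, and whether the external service honors an idempotency key is an assumption you would need to confirm.

```python
import hashlib
import json


def idempotency_key(payload):
    """Derive a stable key from the payload, independent of key order."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def main(input):
    # Hypothetical inputs describing the side effect to perform.
    payload = {"ticket_id": input["ticket_id"], "comment": input["comment"]}
    key = idempotency_key(payload)

    # A real script would send `key` with the external request here, so the
    # receiving service can ignore a repeat of the same operation.
    return {"idempotency_key": key, "payload": payload}
```

Because the key depends only on the inputs, a retry of the same event produces the same key, while a different event produces a different one.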