At Tines, we power important workflows for some of the most demanding teams in the world, and for years, that always meant supporting deterministic, auditable automation. But as reasoning models have matured, our customers have started asking a different question: what if the workflow itself could reason?

The use cases were immediately obvious: summarizing alerts, enriching data, drafting responses. Whatever we built, it needed to feel seamless with the storyboard, not bolted on.

Always starting with trust

Tines was born in security, and security remains one of the central tenets of how we build product: it is non-negotiable. The first AI features we launched were built on AWS Bedrock so that customer data always stayed within the same geographic region as the customer's tenant and out of any LLM training pipelines.

The AI landscape moves extremely fast. Within months of launch, builders were asking to bring their own models: OpenAI for familiar tooling, Mistral for open-source and European models, and self-hosted endpoints for air-gapped environments.

The question then became: how do we support any and all providers without turning our codebase into a patchwork of provider-specific logic?

The generator–client split

Our answer?

A clean split between the features we were building, and the manner in which requests were being made to the providers.


Every AI feature in Tines implements a Generator interface. This standardizes requests to different providers regardless of their expected inputs, and improves the developer experience for shipping new AI features. Engineers don't need to be experts in communicating with different AI providers.

    module AiGeneration::Generators::Generator
      # The guidance given to the model
      sig { abstract.returns(String) }
      def instructions; end

      # Conversation history
      sig { abstract.returns(T::Array[AiGeneration::Message]) }
      def messages; end

      # Available tools the model can call
      sig { abstract.returns(T::Array[AiGeneration::Tool]) }
      def tools; end

      # Optional JSON schema to enforce structured output
      sig { abstract.returns(T.nilable(T::Hash[String, T.untyped])) }
      def output_structure; end

      # Post-process the raw model output
      sig { abstract.params(output: T.nilable(String)).returns(T.nilable(String)) }
      def normalize(output); end
    end

Each generator also declares its configuration requirements, such as timeout, temperature, and whether it needs a reasoning-capable model:

    class Config < T::Struct
      const :model_type, AiModelType # SMART, FAST, or CUSTOM
      const :temperature, Float
      const :reasoning_effort, T.nilable(ReasoningEffort)
      const :timeout, T.nilable(Integer)
    end

The generator never knows which provider is on the other end. That's the job of GeneratorRunner.
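To make the split concrete, here is a minimal plain-Ruby sketch of a generator meeting a client through a runner. It drops the Sorbet signatures so it runs standalone, and `AlertSummaryGenerator`, `EchoClient`, and `SketchRunner` are hypothetical names used only for illustration; the real GeneratorRunner presumably does a good deal more (provider resolution, timeouts, error handling).

```ruby
# Hypothetical generator: describes WHAT to ask, never WHO to ask.
class AlertSummaryGenerator
  def initialize(alert_text)
    @alert_text = alert_text
  end

  def instructions
    "Summarize the security alert in one sentence."
  end

  def messages
    [{ role: "user", content: @alert_text }]
  end

  def tools
    []
  end

  def output_structure
    nil # free-form text, so no JSON schema
  end

  # Post-process the raw model output.
  def normalize(output)
    output&.strip
  end
end

# Hypothetical stand-in for a provider client; a real one would call an SDK.
class EchoClient
  def invoke(instructions:, messages:, tools:, output_structure:)
    "  [#{instructions}] #{messages.last[:content]}  "
  end
end

# Hypothetical runner: the only place where generator and client meet.
class SketchRunner
  def initialize(generator, client)
    @generator = generator
    @client = client
  end

  def run
    raw = @client.invoke(
      instructions: @generator.instructions,
      messages: @generator.messages,
      tools: @generator.tools,
      output_structure: @generator.output_structure,
    )
    @generator.normalize(raw)
  end
end

generator = AlertSummaryGenerator.new("Multiple failed logins for admin@example.com")
puts SketchRunner.new(generator, EchoClient.new).run
```

Swapping `EchoClient` for any other object that responds to `invoke` leaves the generator untouched, which is the property the interface is meant to protect.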

Smart vs. fast: model selection at runtime

Not all AI tasks are equal; they call for different performance characteristics. For example, summarizing an alert requires speed, while complex reasoning over a noisy dataset needs depth. We encode this distinction directly into the model type enum, and resolve the actual provider at runtime:

    @tenant_ai_provider =
      case config.model_type
      when AiModelType::FAST then AiGeneration::Helpers.ai_provider_for_model(@tenant, :fast)
      when AiModelType::SMART then AiGeneration::Helpers.ai_provider_for_model(@tenant, :smart)
      when AiModelType::CUSTOM then @generator.tenant_ai_provider
      end

    @client =
      case provider_name
      when :aws_bedrock then AiGeneration::Clients::AwsBedrock.new(...)
      when :anthropic then AiGeneration::Clients::Anthropic.new(...)
      when :open_ai then AiGeneration::Clients::OpenAi.new(...)
      when :mistral then AiGeneration::Clients::Mistral.new(...)
      else raise DisabledError, "No AI provider enabled"
      end

This delineation lets us build better experiences in areas like Workbench, our AI chat interface. In each conversation, before sending a user's full request to a smart model, we use a fast model to pre-select the relevant tools from their library. With hardcoded model IDs we could not mix models this way, and large tool libraries would flood the context window and degrade response quality.
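The two-stage flow can be sketched as follows. This is an illustration under stated assumptions rather than the Workbench implementation: `SketchTool`, `answer_with_preselection`, and the two stub lambdas are hypothetical, and the models are injected as callables so the sketch runs offline.

```ruby
# Hypothetical tool record; the real AiGeneration::Tool carries more fields.
SketchTool = Struct.new(:slug, :description, keyword_init: true)

# Stage 1: a fast model narrows the library to the tools relevant to the request.
# Stage 2: a smart model answers with only that shortlist in its context.
# Both models are passed in as callables, so this code never names a provider.
def answer_with_preselection(request, library, fast_model:, smart_model:, limit: 5)
  catalog = library.map { |tool| "#{tool.slug}: #{tool.description}" }.join("\n")
  chosen_slugs = fast_model.call(
    "Pick at most #{limit} tool slugs relevant to: #{request}\n#{catalog}",
  )
  shortlist = library.select { |tool| chosen_slugs.include?(tool.slug) }.first(limit)
  smart_model.call(request, shortlist)
end

library = [
  SketchTool.new(slug: "lookup_ip", description: "Look up IP reputation"),
  SketchTool.new(slug: "create_ticket", description: "Open a ticket"),
  SketchTool.new(slug: "send_email", description: "Send an email"),
]

# Stub models; real ones would go through a provider client.
fast_model = ->(_prompt) { ["lookup_ip"] }
smart_model = ->(request, tools) { "#{request} (tools offered: #{tools.map(&:slug).join(', ')})" }

puts answer_with_preselection("Is 203.0.113.7 malicious?", library,
                              fast_model: fast_model, smart_model: smart_model)
```

Only the shortlist reaches the second call, so the smart model's context grows with the number of relevant tools rather than with the size of the library.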

The provider client interface

Each provider wraps its SDK behind a common interface. The core contract is InvokeParameters in and a Result out:

    class InvokeParameters < T::Struct
      const :instructions, String
      const :messages, T::Array[AiGeneration::Message]
      const :tools, T::Array[AiGeneration::Tool]
      const :model, String
      const :temperature, T.nilable(Float)
      const :output_structure, T.nilable(T::Hash[String, JSONSchemaValue])
      const :reasoning_effort, T.nilable(ReasoningEffort)
    end

Each provider also implements both a streaming and a synchronous path to handle the messages sent by the LLM. When a new provider arrives, the work is entirely self-contained: implement the interface, handle the SDK quirks, and register the provider in GeneratorRunner.
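One way to picture the two paths is a base class that derives both from a single chunk source. The sketch below is an assumption about shape, not the actual client code: `SketchParams`, `SketchResult`, `SketchClient`, and `CannedClient` are hypothetical, and the structs are cut-down stand-ins for InvokeParameters and Result.

```ruby
# Simplified stand-ins for the InvokeParameters/Result pair (assumed shapes).
SketchParams = Struct.new(:instructions, :messages, :model, keyword_init: true)
SketchResult = Struct.new(:text, keyword_init: true)

# Hypothetical base class: each provider only supplies a source of chunks.
class SketchClient
  # Synchronous path: collect every chunk, return one result.
  def invoke(params)
    SketchResult.new(text: chunks(params).to_a.join)
  end

  # Streaming path: yield chunks as they arrive, then return the full result.
  def stream(params)
    buffer = +""
    chunks(params).each do |chunk|
      yield chunk
      buffer << chunk
    end
    SketchResult.new(text: buffer)
  end

  private

  def chunks(_params)
    raise NotImplementedError, "provider must implement chunks"
  end
end

# Hypothetical provider that emits a canned reply word by word.
class CannedClient < SketchClient
  private

  def chunks(params)
    "Reply from #{params.model}".scan(/\S+\s*/)
  end
end

params = SketchParams.new(instructions: "Be brief", messages: [], model: "test-model")
client = CannedClient.new
client.stream(params) { |chunk| print chunk }
puts
puts client.invoke(params).text
```

A new provider in this sketch touches one private method, which mirrors the self-contained onboarding described above.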

Enforcing structured output

While AI is good at generating text, workflows often need structured data.

We solve this with an approach that works across nearly every provider: we define a special tool called generate_json_output, inject the user's desired JSON schema as its input specification, and force the model to call that tool via tool_choice:

    # Build the tool from the caller's schema
    def self.from_output_structure(schema)
      AiGeneration::Tool.new(
        name: "Generate JSON Output",
        slug: "generate_json_output",
        description: "Generate a JSON response following the specified schema",
        inputs: [{ name: "output", type: "object", json_schema: schema, required: true }],
      )
    end

    # Force the model to call it (OpenAI-compatible providers)
    tool_choice: parameters.output_structure.present? ?
      { type: "function", function: { name: "generate_json_output" } } : nil

    # Same pattern on Bedrock's Converse API
    request_parameters[:tool_config][:tool_choice] = { tool: { name: "generate_json_output" } }

The model has no choice but to return a valid JSON object matching the schema. The output feeds directly into the next action in the story.
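For OpenAI-compatible providers, the full round trip might look like the sketch below. The helper names (`json_output_tool`, `build_request`, `extract_output`) are hypothetical, nesting the schema under an `output` property is an assumption that mirrors the `inputs` entry above, and the response is a hand-written sample in the chat completions shape, where function arguments arrive as a JSON-encoded string.

```ruby
require "json"

# Wrap the caller's schema in an OpenAI-style function tool (assumed mapping).
def json_output_tool(schema)
  {
    type: "function",
    function: {
      name: "generate_json_output",
      description: "Generate a JSON response following the specified schema",
      parameters: {
        type: "object",
        properties: { output: schema },
        required: ["output"],
      },
    },
  }
end

# Build a chat-completions-style request body that forces the tool call.
def build_request(model:, messages:, schema:)
  {
    model: model,
    messages: messages,
    tools: [json_output_tool(schema)],
    tool_choice: { type: "function", function: { name: "generate_json_output" } },
  }
end

# Pull the structured payload out of the forced tool call.
def extract_output(response)
  call = response.dig("choices", 0, "message", "tool_calls", 0)
  name = call&.dig("function", "name")
  raise ArgumentError, "model did not call generate_json_output" unless name == "generate_json_output"

  JSON.parse(call.dig("function", "arguments")).fetch("output")
end

schema = { type: "object", properties: { severity: { type: "string" } }, required: ["severity"] }
request = build_request(
  model: "example-model",
  messages: [{ role: "user", content: "Triage this alert" }],
  schema: schema,
)

# Hand-written sample standing in for a real provider reply.
sample_response = {
  "choices" => [{
    "message" => {
      "tool_calls" => [{
        "type" => "function",
        "function" => {
          "name" => "generate_json_output",
          "arguments" => JSON.generate({ "output" => { "severity" => "high" } }),
        },
      }],
    },
  }],
}

p extract_output(sample_response)
```

Raising when the expected tool call is missing keeps a malformed reply from flowing silently into the next action.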

Handling API diversity

The phrase "OpenAI-compatible" covers a wide spectrum, and building for multiple providers means building for that variance. Many customers already have approval for specific providers, so the more of that variance we support, the more easily they can adopt our features.

The clean cases are satisfying. Mistral's API is sufficiently compatible that the entire client is a subclass with a URL override:

    # Mistral client that inherits from OpenAi since Mistral's API is OpenAI-compatible.
    # Only overrides provider identity, configuration, model discovery, and the default API endpoint.
    # All invoke/streaming/error handling logic is inherited from the OpenAi chat completions flow.
    class AiGeneration::Clients::Mistral < AiGeneration::Clients::OpenAi
      PROVIDER = :mistral
      DEFAULT_URI_BASE = T.let("https://api.mistral.ai/v1/", String)

      def self.build_client(api_key:, ..., options: {}, ...)
        options["api_endpoint"] ||= DEFAULT_URI_BASE
        super
      end
    end

Other providers diverge more deeply. AWS Bedrock uses a different wire format, a different tool-calling schema, and a different authentication mechanism entirely. We handle those through separate translation layers that get selected alongside the client:

    @workbench_translation =
      case provider_name
      when *OPENAI_COMPATIBLE_PROVIDERS then Workbench::OpenAiTranslation
      when :aws_bedrock then Workbench::ConverseTranslation
      when :oracle_cohere then Workbench::OracleCohereTranslation
      else Workbench::ClaudeTranslation
      end

Provider-specific capabilities require their own first-class support too. For example, extended thinking on Claude models uses a token budget mechanism with constraints that we need to handle explicitly. It is incompatible with forced tool_choice, which is how structured output works, so the two features require coordination:

    # Extended thinking is incompatible with forced tool_choice (used by structured outputs).
    thinking_enabled =
      self.class.thinking_model?(model) &&
      budget_tokens.present? &&
      !parameters.output_structure.present?

Building in monthly cycles

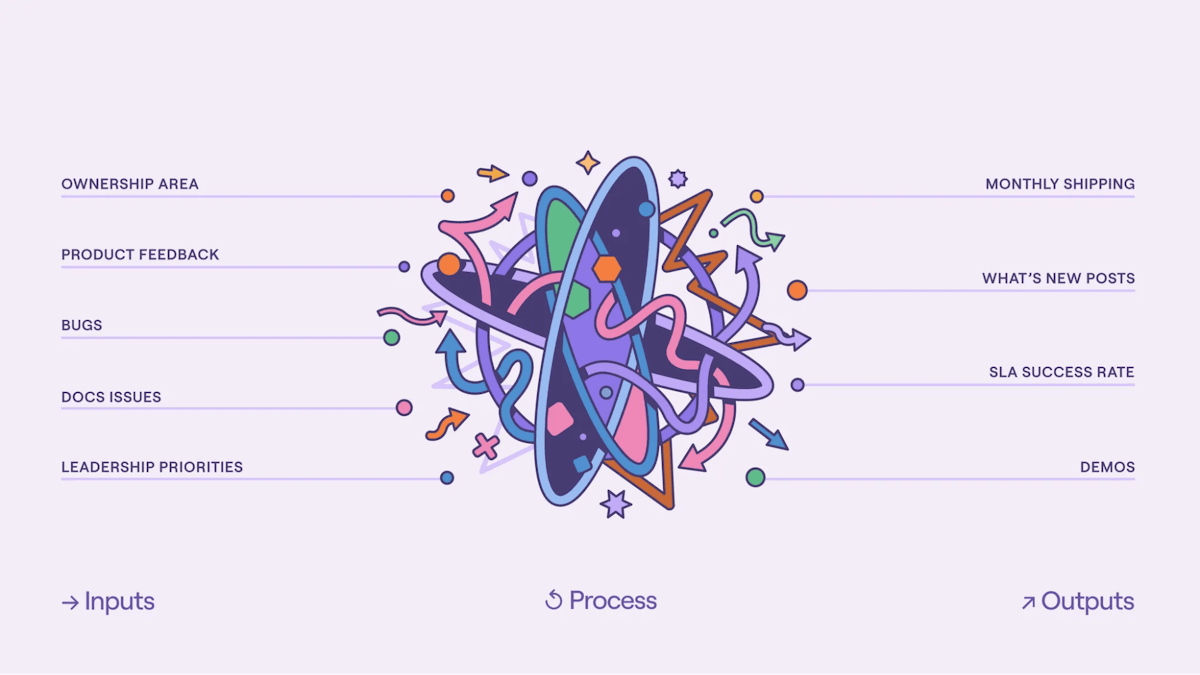

It's worth noting that we didn't design any of this upfront; Tines doesn't operate from a traditional multi-year roadmap. We build in monthly cycles, primarily driven by customer feedback.

Our first AI provider implementation was a simple text field for an OpenAI API key. That grew to include a base URL field, allowing customers to point it at Azure OpenAI. Next came custom headers, so that customers could connect to providers with different authorization requirements. We then added certificate authority support for customers running self-hosted endpoints behind internal PKI.

Each evolution was inspired by actual customer needs. Our current abstraction emerged directly from this feedback, which is why it works so well. We're solving real problems experienced by real customers, not hypothetical ones.
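As a rough picture of where those four stages end up, the sketch below assembles a connection from an API key, a base URL, custom headers, and a CA file using Ruby's standard `Net::HTTP`. `ProviderSettings` and `build_chat_request` are hypothetical names, the `chat/completions` path assumes an OpenAI-compatible endpoint, and nothing is sent over the network.

```ruby
require "net/http"
require "uri"

# Hypothetical settings record covering the four stages described above.
ProviderSettings = Struct.new(:api_key, :base_url, :headers, :ca_file, keyword_init: true)

# Build an HTTP connection object and a request without sending anything.
# base_url must end with "/" so that URI.join appends rather than replaces.
def build_chat_request(settings, body)
  uri = URI.join(settings.base_url, "chat/completions")

  http = Net::HTTP.new(uri.host, uri.port)
  http.use_ssl = uri.scheme == "https"
  http.ca_file = settings.ca_file if settings.ca_file # trust an internal CA

  request = Net::HTTP::Post.new(uri.request_uri, { "Content-Type" => "application/json" })
  request["Authorization"] = "Bearer #{settings.api_key}" if settings.api_key
  # Custom headers cover providers with other authorization schemes.
  (settings.headers || {}).each { |name, value| request[name] = value }
  request.body = body

  [http, request]
end

settings = ProviderSettings.new(
  api_key: nil,
  base_url: "https://llm.internal.example/v1/",
  headers: { "X-Api-Key" => "example-token" },
  ca_file: "/etc/ssl/certs/internal-ca.pem",
)
http, request = build_chat_request(settings, '{"model":"example-model","messages":[]}')
puts "#{request.method} #{http.address}#{request.path}"
```

Each field is optional in the sketch, so the simplest configuration (an API key against a default base URL) and the most demanding one (custom headers plus an internal CA) go through the same code path.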

What’s next

Tines customers can connect to any AI provider through our platform, whether cloud-hosted or self-hosted, standard or bespoke. A customer running an air-gapped deployment can configure a self-hosted endpoint with a custom certificate authority and route all AI calls through their own infrastructure, all without making infrastructure changes on their side.

Building iteratively and keeping the provider interface narrow meant we could add each new provider without breaking existing ones. The generator–client split kept feature development decoupled from infrastructure concerns. And the structured output trick—turning JSON schema enforcement into a tool-calling problem—gave us consistent behavior across providers that don't natively support response schemas.

Given the rapid rate of change in AI, we’re going to continue working on new standards (like MCP and Agent Skills) and ensure that our AI infrastructure continues to be as flexible as our customers need it to be.