AI has radically transformed the way SOC teams operate, but how is it affecting the people behind the work?

For our recent Voice of Security 2026 report, we surveyed over 1,800 global security professionals to find out. We wanted to understand not only AI’s impact on security careers, but also how teams really feel about these shifts.

The results show that despite rising workloads and widespread burnout across security teams, sentiment toward AI is largely positive. Both leaders and practitioners feel optimistic about the opportunities ahead, and the data suggests AI will help relieve pressure points that may otherwise lead to attrition while shifting security work toward more strategic responsibilities.

Read on to learn how AI is reshaping security work and careers, and download the full report for more insights and actionable advice.

AI is widespread, but the manual work hasn’t disappeared

Today, 99% of SOCs use AI in some capacity. But despite widespread adoption, security teams still struggle with muckwork. Four out of five respondents (81%) say their workloads have increased over the past 12 months, with a third reporting the increase as "significant." Much of this consists of manual or repetitive work: on average, 44% of team time is spent on tasks that could be automated.

As a result, over three-quarters of security professionals (76%) have experienced burnout in the last 12 months. Heavy workloads are the main culprit: 39% of respondents cite them as the primary cause of their emotional exhaustion, reduced motivation, and mental fatigue. Repetitive tasks are also a major contributor, tying for second place with the stress of incident response (both 26%).

If left unaddressed, this could have consequences for retention.

Practitioners say that a good work-life balance is the most important factor helping them stay in their role, meaning that overwhelming workloads may have a direct influence on turnover.

But heavy workloads don’t just hurt team morale. They also introduce significant risk, increasing the likelihood of human error and reducing capacity to respond quickly when – not if – urgent threats arise. Some 73% of security professionals believe their organization is likely to experience a significant cybersecurity incident in 2026, further compounding the pressure teams face and reinforcing how critical it is to address these issues sooner rather than later.

Security professionals are optimistic about AI’s impact on their careers

Against this backdrop, teams are feeling enthusiastic about the opportunities AI brings. The vast majority (86%) believe that AI will create new opportunities in the security job market, with 46% of these saying they’re “very optimistic.”

This optimism is even stronger among burned-out teams: 65% of respondents who “frequently” experience burnout are very optimistic, roughly 20 percentage points above average. Teams that currently spend 60% or more of their time on manual work are also highly optimistic about AI (56% say they’re “very optimistic”). This suggests that both groups recognize how much AI could change their day-to-day work.

Security teams recognize that AI excels at a range of critical jobs, like threat intelligence and detection, compliance and policy writing, identity and access monitoring, and phishing analysis.

Used as part of intelligent workflows, AI has the potential to remove repetitive, mundane tasks that eat up resources and burn teams out, freeing up analyst time and restoring the work-life balance they need to thrive.

The analyst role is becoming more strategic

As careers are reshaped by AI, new priorities emerge. Security professionals say the top skills for 2026 are:

AI literacy and prompt engineering (36%)

Cloud and infrastructure security (34%)

Automation and scripting (28%)

Threat intelligence and analysis (28%)

Data governance and ethics (25%)

These priorities reveal how roles are changing. Analysts will no longer execute every step. Instead, they must supervise, interpret outputs, and make informed decisions. The shift also shows in how security is perceived within organizations: 43% of respondents say their security function is viewed as a strategic enabler.

This evolution is only possible when practitioners step away from muckwork. If teams continue to spend 44% of their time – the equivalent of 3.5 hours of an 8-hour workday – on manual, repetitive tasks, they won’t have the necessary resources to focus on more strategic, higher-impact areas like proactive threat hunting and complex incident investigation.
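The 3.5-hour figure is simple arithmetic: 44% of an 8-hour day is 3.52 hours. The short Python sketch below reproduces it; the 5-day week used for the weekly figure is our own illustrative assumption, not a number from the report.

```python
# Convert a share of working time into hours.
HOURS_PER_DAY = 8   # workday length used in the text above
DAYS_PER_WEEK = 5   # assumption for illustration only; not from the report


def automatable_hours(share: float, hours: float) -> float:
    """Return the hours taken up by work that makes up `share` of `hours`."""
    return share * hours


share = 0.44  # average share of team time spent on tasks that could be automated
daily = automatable_hours(share, HOURS_PER_DAY)
weekly = automatable_hours(share, HOURS_PER_DAY * DAYS_PER_WEEK)
print(f"{daily:.2f} hours per day, {weekly:.1f} hours per week")
# prints "3.52 hours per day, 17.6 hours per week"
```

Under that 5-day assumption, the same 44% adds up to well over two full workdays every week.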

A blended approach works best for modern SOC teams

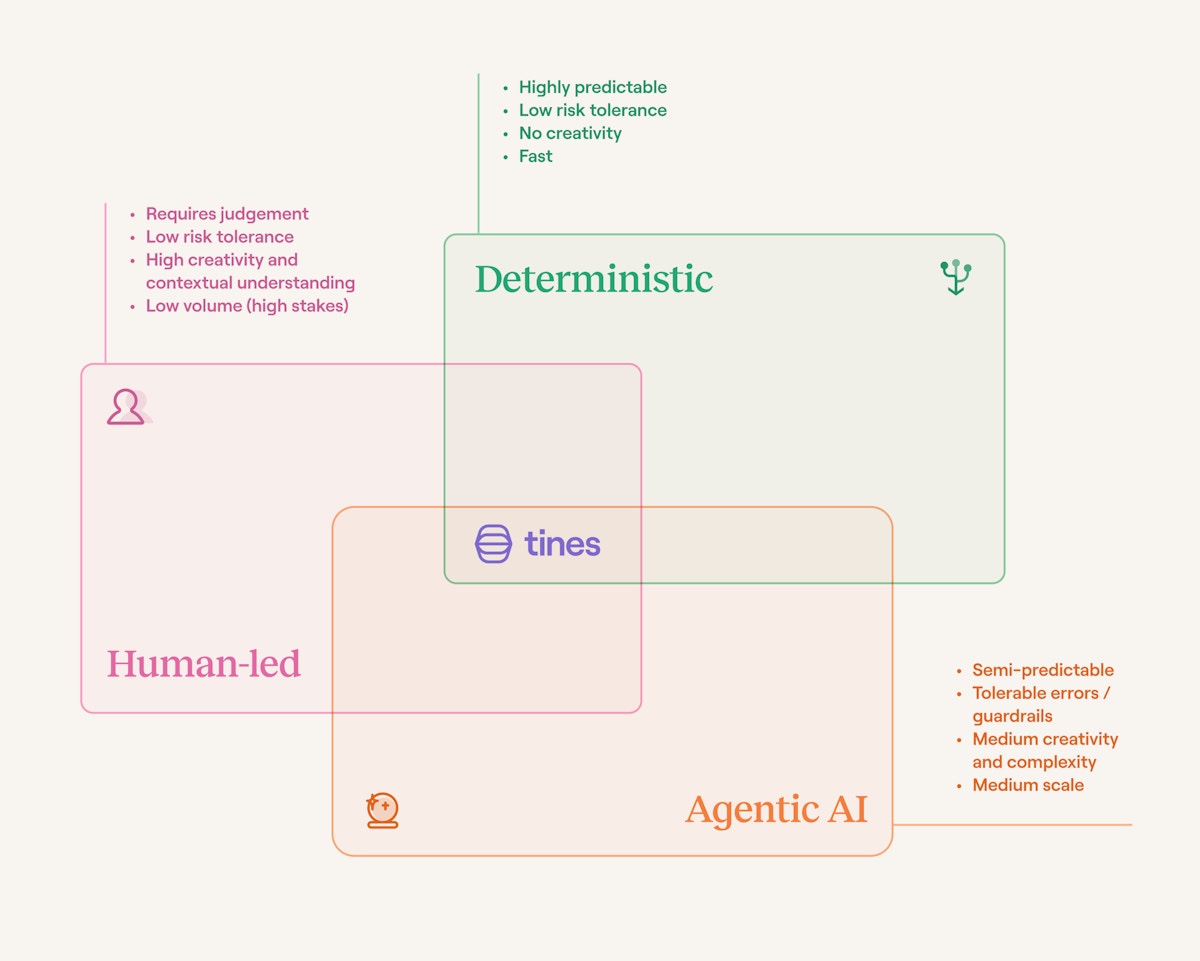

To function at maximum efficiency, teams need a blended approach that unites AI, automation, integration, and humans. This is where intelligent workflows come in. They combine three essential types of work: rules-based automation, agentic AI, and humans-in-the-loop to give security teams the flexibility they need to apply the right approach to each task.

What does this look like in practice?

AI agents respond to the inputs you provide, decide the next step, and act on it. Typical tasks include:

Analyzing and triaging alerts

Investigating alerts using context across tools

Drafting responses to common requests

Deterministic workflows handle highly predictable, reliable, and controlled tasks, like:

Compliance-heavy processes, such as user access reviews

Evidence collection

Incident documentation where auditability is critical

Destructive tasks requiring precise control, such as disabling user accounts or modifying firewall rules

Humans handle high-stakes and high-impact tasks that require judgement, creativity, and deeper context, like:

Strategic decisions

Additional analysis during important investigations

Policy reviews

Anomaly triage requiring contextual nuance

The result is a coordinated system in which teams can scale their impact and take on more strategic work while maintaining compliance and visibility, without burning out.
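To make the division of labor more concrete, here is a minimal Python sketch of a router that assigns each task to agentic AI, a deterministic workflow, or a human. The task attributes (`high_stakes`, `needs_audit`, `destructive`), the `route` function, and the precedence of the checks are hypothetical choices for illustration, not a description of any specific product or of the report's methodology.

```python
from dataclasses import dataclass
from enum import Enum


class Executor(Enum):
    """The three types of work an intelligent workflow combines."""
    AGENTIC_AI = "agentic AI"
    DETERMINISTIC = "deterministic workflow"
    HUMAN = "human-in-the-loop"


@dataclass
class Task:
    # Hypothetical attributes chosen for illustration only.
    name: str
    high_stakes: bool = False   # strategic or high-impact decisions
    needs_audit: bool = False   # compliance or auditability requirements
    destructive: bool = False   # e.g. disabling accounts, modifying firewall rules


def route(task: Task) -> Executor:
    """Pick an executor; check order encodes precedence (an assumption)."""
    if task.high_stakes:
        return Executor.HUMAN
    if task.destructive or task.needs_audit:
        return Executor.DETERMINISTIC
    # Remaining work (triage, investigation, drafting) goes to AI agents.
    return Executor.AGENTIC_AI


if __name__ == "__main__":
    tasks = [
        Task("Triage phishing alert"),
        Task("Disable compromised user account", destructive=True),
        Task("Collect evidence for audit", needs_audit=True),
        Task("Approve new access policy", high_stakes=True),
    ]
    for t in tasks:
        print(f"{t.name}: {route(t).value}")
```

Checking `high_stakes` first means a task that is both high-stakes and destructive goes to a human; a real team might weigh these attributes differently.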

AI governance and human guardrails are crucial

The research found that formalized AI policies give teams an advantage: nearly two-thirds (65%) of respondents with an active AI policy are “very confident” that AI outputs are subject to human-in-the-loop checks and other guardrails, compared to only 25% of teams with an AI policy in progress and 17% of teams without one in place.

As AI becomes an essential part of any SOC, governance and human guardrails remain critical to success. AI won’t, and can’t, replace security professionals. Organizations don’t intend it to. Instead, businesses are using AI to strengthen, not supplant, their teams, with 41% using it for specific tasks only and 37% implementing a human-in-the-loop approach.
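A human-in-the-loop check can be as simple as holding an AI-proposed action until a reviewer signs off, and logging the decision. The Python sketch below illustrates that idea under stated assumptions: `ProposedAction`, `Guardrail`, and the injected `approve` callback are hypothetical names, and the lambda reviewer stands in for a real person.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class ProposedAction:
    """An action suggested by an AI agent (hypothetical structure)."""
    description: str
    rationale: str


@dataclass
class Guardrail:
    """Holds AI-proposed actions until a human approves or rejects them."""
    approve: Callable[[ProposedAction], bool]  # human decision, injected for testability
    audit_log: List[str] = field(default_factory=list)

    def review(self, action: ProposedAction, execute: Callable[[], str]) -> Optional[str]:
        approved = self.approve(action)
        # Record every decision so reviews remain auditable.
        self.audit_log.append(f"{'APPROVED' if approved else 'REJECTED'}: {action.description}")
        if not approved:
            return None  # nothing runs without human sign-off
        return execute()


if __name__ == "__main__":
    # Stand-in reviewer: approve only actions that come with a rationale.
    gate = Guardrail(approve=lambda a: bool(a.rationale.strip()))
    result = gate.review(
        ProposedAction("Quarantine host WS-042", "EDR flagged suspicious behavior"),
        execute=lambda: "host quarantined",
    )
    print(result)          # prints "host quarantined"
    print(gate.audit_log)  # prints "['APPROVED: Quarantine host WS-042']"
```

Keeping the approval step outside the agent means the AI can propose, but only a human decision triggers execution.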

Building a future-proof AI SOC

For many security teams, manual work continues to drain resources, increase risk, and cause burnout. But it doesn’t have to be this way, and professionals are enthusiastic about the opportunities AI unlocks for their careers.

The future SOC is a mix of human-led, deterministic, and agentic workflows. Using intelligent workflows, leaders and teams can:

Protect security practitioners’ time and energy to reduce burnout

Scale security’s impact across the organization without additional headcount

Reshape their operations and free up more time to deliver strategic value

Want to learn more? Download the full Voice of Security 2026 report now to get even deeper insights and actionable tips to elevate your SOC.